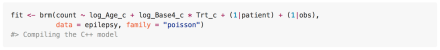

As you probably know, I’m a big fan of R’s brms package, available from CRAN. In case you haven’t heard of it, brms is an R package by Paul-Christian Buerkner that implements Bayesian regression of all types using an extension of R’s formula specification that will be familiar to users of lm, glm, and lmer. Under the hood, it translates the formula into Stan code, Stan translates this to C++, your system’s C++ compiler is used to compile the result and it’s run.

brms is impressive in its own right. But also impressive is how it continues to add capabilities and the breadth of Buerkner’s vision for it. I last posted something way back on version 0.8, when brms gained the ability to do non-linear regression, but now we’re up to version 1.1, with 1.2 around the corner. What’s been added since 0.8, you may ask? Here are a few highlights: